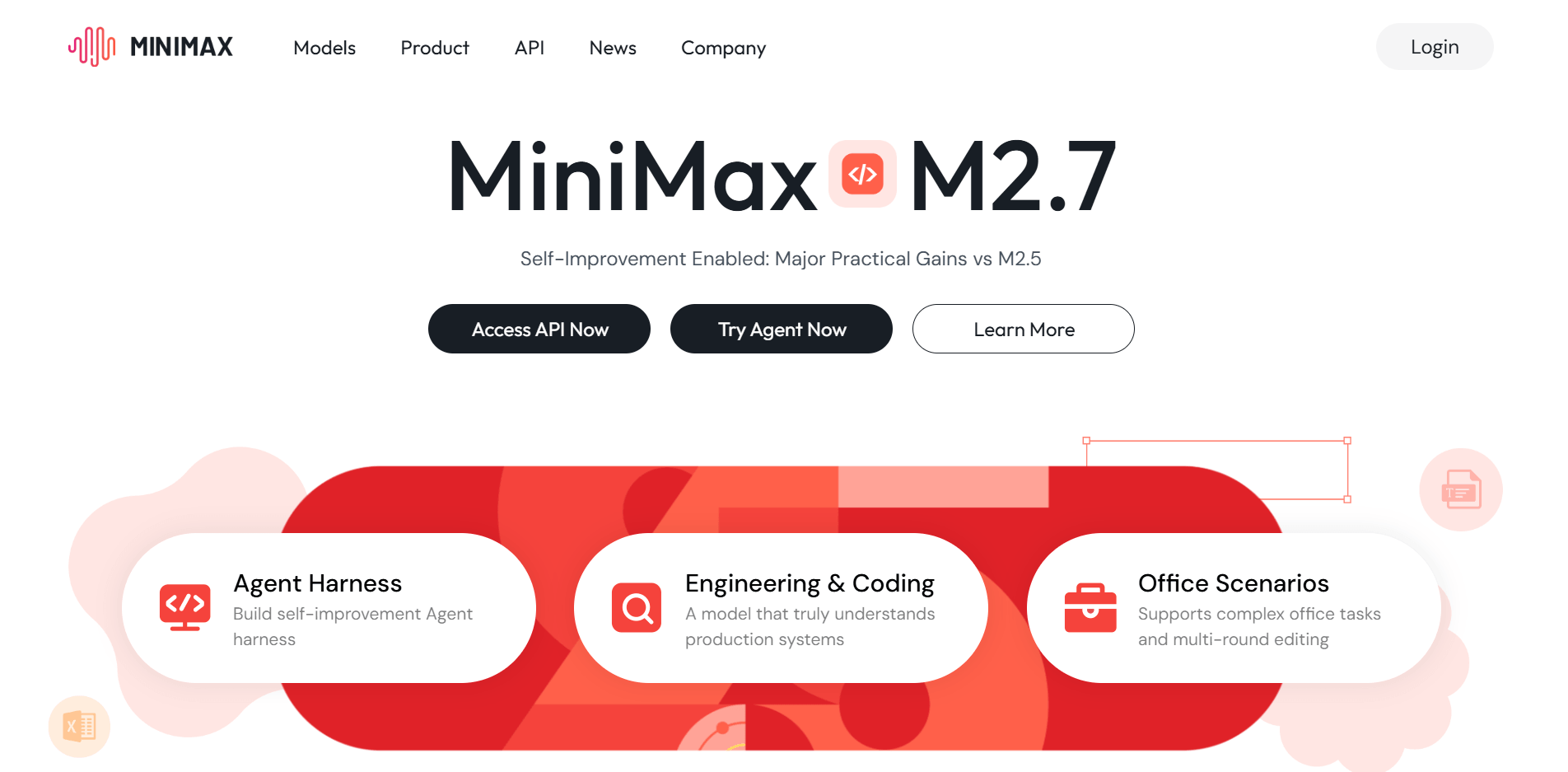

MiniMax has spent the last two years quietly building a general-purpose model stack for Asian super-apps, creators, and enterprise copilots. The new MiniMax M2.7 release is the clearest signal yet that the company wants to play in the same class as GPT-5.4 and Claude Opus, not just as a voice tool. Beyond the flashy "self-evolving" headline, M2.7 brings measurable upgrades in software engineering, office productivity, and agent execution benchmarks—all while undercutting rivals on price. This guide distills the model's architecture, benchmarks, cost structure, and implementation patterns so you can decide whether to plug it into your MiniMax Agent workspace, an OpenClaw agent fleet, or a hybrid stack.

TL;DR

- Self-evolving training: M2.7 orchestrated 100+ rounds of scaffold optimization on itself, netting a 30% internal performance bump and 97% skill adherence across 40+ complex workflows.

- Benchmarks that matter: 56.22% on SWE-Pro, 1495 ELO on GDPval-AA office tasks, and 86.2% on PinchBench place it near Claude Opus and GPT-5.4 in agent scenarios.

- Cost advantage: $0.30 / 1M input tokens and $1.20 / 1M output tokens via the MiniMax API makes it one of the most affordable frontier models.

- Agent-first tooling: MiniMax Agent, Talkie, and enterprise APIs expose M2.7 as a drop-in backend for research copilots, DevOps bots, and knowledge workers.

- Rollout plan: Treat M2.7 as a specialization tier—prototype with long-memory copilots, benchmark against your GPT stack, and route workloads where it wins on price/performance.

MiniMax M2.7 at a Glance

- Model family: Proprietary multimodal foundation model covering text, speech, video, image, and music (MiniMax official site).

- Mission focus: co-creating intelligence with everyone. MiniMax positions itself as an AGI-era platform company serving users in 20+ countries and 214K+ enterprise clients (MiniMax corporate overview).

- Product line: M2.7 anchors MiniMax Agent, Hailuo Video, MiniMax Music 2.5+, Speech 2.6, and token packages aimed at developers building long-context agents.

What “Self-Evolution” Actually Means

| Step | How M2.7 Uses It | Why Builders Care |
| --- | --- | --- |
| Memory updates | Model edits its own long-term memory based on reinforcement results | Reduces drift when agents operate over ultra-long contexts |
| Skill bootstrapping | Builds RL harnesses, generates datasets, tests harnesses autonomously | Frees human researchers from repetitive scaffolding |
| Scaffold optimization | 100+ rounds of iterative evaluation → 30% internal gain (VentureBeat coverage) | Delivers real SWE-Pro uplift vs. M2.5 without manual babysitting |
| Human-in-the-loop checkpoints | Researchers approve major skill changes | Keeps compliance and product teams in control |
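The table describes scaffold optimization as repeated rounds of evaluation, with researchers approving major changes. A minimal way to picture that pattern is a greedy hill-climbing loop: propose a change, score it on a fixed eval set, and keep it only if it helps. Large jumps are sent to a human gate. This is a hypothetical sketch, not MiniMax's training code. `Scaffold`, `propose_change`, `evaluate`, `approve`, and the 5% "major gain" threshold are all illustrative assumptions. The 100-round default simply mirrors the "100+ rounds" figure.

```python
import random
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Scaffold:
    """Hypothetical agent scaffold reduced to numeric knobs (retry counts, prompt weights, ...)."""
    params: dict[str, float] = field(default_factory=dict)


def optimize_scaffold(
    scaffold: Scaffold,
    propose_change: Callable[[Scaffold], Scaffold],
    evaluate: Callable[[Scaffold], float],
    approve: Callable[[Scaffold, float, float], bool],
    rounds: int = 100,
    major_gain: float = 0.05,
) -> tuple[Scaffold, float]:
    """Greedy evaluate-and-keep loop with a human gate on large jumps."""
    best, best_score = scaffold, evaluate(scaffold)
    for _ in range(rounds):
        candidate = propose_change(best)
        score = evaluate(candidate)
        if score <= best_score:
            continue  # no improvement on the fixed eval set: discard the candidate
        gain = (score - best_score) / abs(best_score) if best_score else float("inf")
        # Assumption: unusually large relative gains count as "major" and need sign-off.
        if gain > major_gain and not approve(candidate, best_score, score):
            continue
        best, best_score = candidate, score
    return best, best_score


# Toy demo: a single knob "x" whose optimum is 3.0 (hypothetical objective).
rng = random.Random(0)


def propose(s: Scaffold) -> Scaffold:
    return Scaffold({"x": s.params["x"] + rng.uniform(-0.5, 0.5)})


def evaluate(s: Scaffold) -> float:
    return 10.0 - (s.params["x"] - 3.0) ** 2


best, score = optimize_scaffold(Scaffold({"x": 0.0}), propose, evaluate, approve=lambda *_: True)
print(best.params, round(score, 3))
```

Because the loop only accepts improvements on the same eval set, the score never regresses between rounds. The approval callback is where a human-in-the-loop checkpoint would plug in.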

Benchmark Snapshot (March 2026)

- SWE-Pro: 56.22% (approaches Claude Opus territory for software delivery). M2.7 can handle log ingestion, multi-signal debugging, and ML pipeline edits.

- GDPval-AA (office-suite ELO): 1495, the highest publicly shared score among non-flagship models; the same office skills power Excel/PowerPoint co-editing.

- PinchBench (agent execution): 86.2%, trailing only GPT-5.4 and GLM-5, but exceeding Gemini 3.1 in many open-world agent tasks.

- Self-evolution competitions: 66.6% medal rate across 22 ML contests, tying Gemini 3.1 for experiment agility.

Why it matters: SWE-Pro and PinchBench correlate with end-to-end delivery—if your stack relies on complex instructions plus tool use (observability, CI/CD, doc editing), M2.7 can sit behind an OpenClaw agent without rewriting your harness.

Pricing & TokenPlan

- API list price: $0.30 / 1M input tokens & $1.20 / 1M output tokens (MiniMax TokenPlan page).

- Enterprise bundles: Multi-seat packages inside MiniMax Agent give ops teams predictable spend while piggybacking on the same weights.

- Third-party access: OpenRouter and other aggregators mirror the same pricing tier, making it easy to swap M2.7 into existing router policies.
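Aggregators such as OpenRouter expose models through an OpenAI-compatible chat-completions endpoint (`https://openrouter.ai/api/v1`). When that holds, swapping M2.7 into an existing router is mostly a configuration change. The sketch below builds a request with Python's standard library but does not send it. The model identifiers `minimax/minimax-m2.7` and `openai/gpt-5.4` and the `OPENROUTER_API_KEY` variable name are hypothetical placeholders. Check the provider's model list before relying on them.

```python
import json
import os
import urllib.request
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    base_url: str     # OpenAI-compatible base URL
    model: str        # provider-specific model id (hypothetical values below)
    api_key_env: str  # name of the environment variable holding the API key


# Hypothetical routing table: only these entries change when you swap models.
ENDPOINTS = {
    "m2.7": Endpoint("https://openrouter.ai/api/v1", "minimax/minimax-m2.7", "OPENROUTER_API_KEY"),
    "gpt": Endpoint("https://openrouter.ai/api/v1", "openai/gpt-5.4", "OPENROUTER_API_KEY"),
}


def build_chat_request(route: str, messages: list[dict], max_tokens: int = 1024) -> urllib.request.Request:
    """Build (but do not send) a chat-completions request for the chosen route."""
    ep = ENDPOINTS[route]
    body = json.dumps({"model": ep.model, "messages": messages, "max_tokens": max_tokens}).encode("utf-8")
    return urllib.request.Request(
        url=f"{ep.base_url}/chat/completions",
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {os.environ.get(ep.api_key_env, '')}",
        },
        method="POST",
    )


req = build_chat_request("m2.7", [{"role": "user", "content": "Summarize this log."}])
print(req.full_url, req.get_method())
# To actually send it: urllib.request.urlopen(req, timeout=60)
```

The harness code stays identical across providers. Only the `ENDPOINTS` entry selected by the router changes.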

Implementing M2.7 in an OpenClaw Stack

- Long-context copilots: Use M2.7 for log triage agents that require 200k+ tokens, while keeping GPT-5.4 for natural language finesse.

- Office workflow bots: Route PPT/Excel-heavy workflows (budget models, quarterly decks) to M2.7, whose office capabilities are measured by GDPval-AA.

- Knowledge-base agents: Pair M2.7 with MiniMax’s memory API or OpenClaw PinchDB to help sustain the reported 97% skill adherence over 40+ commands.

- Cost-aware routing: Build a policy that routes to M2.7 when prompts exceed 20k tokens or when cost per experiment matters more than latency.
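The routing rules in the list above can be written as a small, pure policy function. This is a hypothetical sketch. The task labels, the `Task` fields, and the route names are assumptions. The 20k-token threshold comes from the cost-aware routing rule. A production router would also weigh latency targets and fallback health.

```python
from dataclasses import dataclass

LONG_PROMPT_TOKENS = 20_000  # threshold from the cost-aware routing rule

# Hypothetical task labels for the M2.7 specializations listed above.
M27_TASK_KINDS = {"log_triage", "office", "knowledge_base"}


@dataclass
class Task:
    kind: str                     # hypothetical label, e.g. "office" or "chat"
    prompt_tokens: int
    cost_sensitive: bool = False  # True when cost per experiment outweighs latency


def route(task: Task) -> str:
    """Pick a hypothetical route name ("m2.7" or "gpt-5.4") for one request."""
    if task.kind in M27_TASK_KINDS:
        return "m2.7"  # long-context triage, PPT/Excel workflows, knowledge-base agents
    if task.prompt_tokens > LONG_PROMPT_TOKENS or task.cost_sensitive:
        return "m2.7"  # long prompts or cost-dominated experiments
    return "gpt-5.4"   # default tier for natural-language finesse


for t in [Task("chat", 3_000), Task("chat", 45_000), Task("office", 2_000), Task("chat", 1_000, cost_sensitive=True)]:
    print(t.kind, t.prompt_tokens, "->", route(t))
```

Keeping the policy free of side effects makes it easy to unit-test. It also makes it easy to log each routing decision next to token usage and latency.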

Comparison vs. Other Frontier Models

| Capability | MiniMax M2.7 | Claude 5.2 Opus | |
| --- | --- | --- | --- |
| SWE-Pro | 56.22% | ~58% | ~56% |
| PinchBench | 86.2% | 90%+ | 84% |
| Office ELO | 1495 | 1450 | 1470 |
| Pricing (input/output) | $0.30 / $1.20 | $0.60 / $2.40 | $0.80 / $3.20 |
| Agent skill adherence | 97% | 95% | 93% |

Takeaway: GPT-5.4 still leads on pure reasoning, but MiniMax M2.7 narrows the gap in engineering and office verticals at half the list price of Claude 5.2 Opus.
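To see what the price gap means for a real workload, multiply token counts by the per-million list prices from the comparison table. The sketch below assumes a hypothetical log-triage job: a 200k-token prompt and a 4k-token answer, run 1,000 times a day. It ignores caching, enterprise bundles, and TokenPlan discounts.

```python
# List prices in USD per 1M tokens (input, output), from the comparison table.
PRICES = {
    "MiniMax M2.7": (0.30, 1.20),
    "Claude 5.2 Opus": (0.60, 2.40),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """USD cost of one request at list price (no caching or discounts)."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


# Hypothetical workload: 200k-token prompt, 4k-token answer, 1,000 requests per day.
for model in PRICES:
    daily = 1_000 * request_cost(model, 200_000, 4_000)
    print(f"{model}: ${daily:,.2f}/day")
```

Both of M2.7's list prices are exactly half of the Claude 5.2 Opus column. The cost ratio is therefore 0.5 for any mix of input and output tokens. Against the third price column ($0.80 / $3.20), it is 0.375.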

Rollout Roadmap for Builders

- Week 0–1: Evaluate API ergonomics. Mirror your best GPT prompt flows inside the MiniMax Agent console; log token usage and latency.

- Week 2–3: Rebuild SWE-Pro-style harnesses with automated regression: unit tests, repo diffs, telemetry dashboards.

- Week 4: Pilot in one production agent (observability triage, finance modeling, localization QC). Set guardrails for context windows, concurrency, and fallback models.

- Week 5+: Expand to multi-agent orchestrations (OpenClaw PinchBench suite) where M2.7’s self-evolving scaffolds lower maintenance.
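Week 4's guardrails (context-window caps, concurrency limits, fallback models) can be wrapped around any model client. The asyncio sketch below is hypothetical. `flaky_primary` and `stable_fallback` stand in for real M2.7 and fallback clients. The 200k-token cap, concurrency of 4, and 30-second timeout are placeholder values to tune during the pilot.

```python
import asyncio
from typing import Awaitable, Callable

ModelCall = Callable[[str], Awaitable[str]]  # hypothetical client: prompt -> completion


class GuardedRoute:
    """Wrap a pilot model with a context cap, a concurrency limit, and a fallback model."""

    def __init__(self, primary: ModelCall, fallback: ModelCall, *,
                 max_prompt_tokens: int, max_concurrency: int, timeout_s: float) -> None:
        self.primary = primary
        self.fallback = fallback
        self.max_prompt_tokens = max_prompt_tokens
        self.timeout_s = timeout_s
        # Construct GuardedRoute inside a running event loop (see main below).
        self._slots = asyncio.Semaphore(max_concurrency)

    async def __call__(self, prompt: str, prompt_tokens: int) -> tuple[str, str]:
        """Return (which_model, completion)."""
        if prompt_tokens > self.max_prompt_tokens:
            return "fallback", await self.fallback(prompt)  # over the pilot's context budget
        async with self._slots:  # at most max_concurrency in-flight pilot calls
            try:
                return "primary", await asyncio.wait_for(self.primary(prompt), self.timeout_s)
            except Exception:  # timeouts and upstream errors both trigger the fallback
                pass
        return "fallback", await self.fallback(prompt)


async def flaky_primary(prompt: str) -> str:
    if "crash" in prompt:
        raise RuntimeError("upstream error")
    return f"m2.7: {prompt}"


async def stable_fallback(prompt: str) -> str:
    return f"gpt: {prompt}"


async def main() -> list[tuple[str, str]]:
    guard = GuardedRoute(flaky_primary, stable_fallback,
                         max_prompt_tokens=200_000, max_concurrency=4, timeout_s=30.0)
    return await asyncio.gather(
        guard("triage logs", 1_000),
        guard("crash please", 1_000),
        guard("huge dump", 500_000),
    )


results = asyncio.run(main())
print(results)
```

The fallback call runs after the semaphore is released, so a failing pilot model does not hold concurrency slots while the backup answers.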

FAQ

Q: Does MiniMax M2.7 support multimodal input?

A: Yes. MiniMax positions M2.7 within a full-stack matrix that spans text, speech, video, image, and music, similar to the capabilities powering Hailuo Video and MiniMax Music 2.5+.

Q: Is the model open weight?

A: No. MiniMax keeps core weights closed but exposes APIs, enterprise hosting, and MiniMax Agent packaging.

Q: How do I access the token plan?

A: Request access via the MiniMax TokenPlan portal; developers typically get API credentials plus discounted usage blocks for long-context experiments.