By 2026, Alibaba’s Wukong AI agent had moved from an impressive R&D showcase to a production-ready automation layer inside DingTalk. CIOs now weigh it against open frameworks such as OpenClaw to decide which assistant stack can handle multilingual workflows, AI safety rules, and regional compliance. This guide clarifies the differences, shows how DingTalk’s native integrations strengthen Wukong, and lays out an implementation blueprint fit for regulated enterprises.

The article also traces how DingTalk AI Agent 2026 redefines governance, why the Wukong vs OpenClaw debate hinges on control versus speed, and which practical KPIs leadership teams should monitor after rollout. It pairs concise TL;DR bullets with narrative paragraphs, so both strategy-deck builders and long-form readers get what they need.

TL;DR / Key Takeaways

- Wukong + DingTalk = turnkey: Pre-built connectors, policy guardrails, and China-enterprise compliance baked in.

- OpenClaw excels at extensibility: A richer plug-in ecosystem and on-prem control, at the cost of heavier operations.

- Decision point: Choose Wukong when you need rapid rollout inside DingTalk; choose OpenClaw when you need hybrid cloud/on-prem orchestration.

- Roadmap: Start with DingTalk’s AI Agent 2026 sandbox, run dual pilots (Wukong vs. OpenClaw), and standardize evaluation metrics (latency, hallucination rate, governance fit).

1. Alibaba Wukong AI Agent in 2026: What’s New

Wukong’s latest release turns the agent into an orchestration brain rather than a single bot. Alibaba tied together DingTalk chat signals, document understanding, and IoT telemetry so that one agent can read a supplier contract, interpret a field photo, and trigger procurement approvals within seconds. Carrying that context end to end reduces manual handoffs, which helps explain why frontline teams in manufacturing, logistics, and retail branches are piloting the tool simultaneously.

- Context-aware automation: Wukong now interprets DingTalk chat intent, Lark-style docs, and IoT telemetry simultaneously.

- Guardrail studio: Policy templates covering data residency, HR workflows, and finance approvals reduce custom coding by ~35% (Alibaba Cloud Summit 2026 keynote).

- Multimodal co-pilots: Embedded image-to-text and spreadsheet reasoning enable frontline teams to convert field photos into procurement tasks.

2. Deep Dive: DingTalk AI Agent 2026 Stack

DingTalk’s 2026 AI stack is more than a chat wrapper: it is a full AI fabric layered over collaboration, mini-apps, and enterprise controls. Administrators can drag connectors onto a workflow canvas, specify who can invoke a particular prompt chain, and audit every hop without leaving the admin console. The result is a governed environment where business teams can experiment while limiting the organization’s exposure to prompt injection and data leakage.

- Workflow graph: DingTalk stitches chat triggers, Wukong reasoning nodes, and mini-app actions via visual DAGs, making complex automations legible for non-developers.

- Security posture: Default SOC 2 mappings, zero-trust architecture (ZTA) device trust, and audit-ready logs exported to Alibaba Cloud SIEM keep compliance teams in the loop.

- Ops console: Real-time latency dashboards, automated rollback for rogue prompts, and prompt versioning down to individual teams shrink mean time to repair.

- Localization: Mandarin, English, and Japanese intent packs plus regional transliteration tables speed up rollouts at cross-border subsidiaries.
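DingTalk edits these workflow graphs visually, but the underlying idea is an ordinary dependency graph. The Python sketch below is a hypothetical model, not DingTalk’s API: the node names are illustrative, and it uses the standard-library `graphlib` module (Python 3.9+) to derive a valid run order and to reject flows that loop back on themselves.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical flow: node names are illustrative, not DingTalk identifiers.
# Each key maps a node to the set of nodes that must finish before it runs.
PROCUREMENT_FLOW: dict[str, set[str]] = {
    "chat_trigger": set(),
    "wukong_read_contract": {"chat_trigger"},
    "wukong_read_field_photo": {"chat_trigger"},
    "wukong_policy_check": {"wukong_read_contract", "wukong_read_field_photo"},
    "miniapp_procurement_approval": {"wukong_policy_check"},
}


def run_order(flow: dict[str, set[str]]) -> list[str]:
    """Return one valid execution order; raises CycleError if the flow loops."""
    return list(TopologicalSorter(flow).static_order())


def is_valid_dag(flow: dict[str, set[str]]) -> bool:
    """True when the flow has no cycles and can therefore be scheduled."""
    try:
        run_order(flow)
    except CycleError:
        return False
    return True


if __name__ == "__main__":
    print(run_order(PROCUREMENT_FLOW))
    print(is_valid_dag({"a": {"b"}, "b": {"a"}}))  # a two-node loop is rejected
```

Because `graphlib` raises `CycleError` on a loop, a check like this is one way a platform team could validate hand-written flow definitions before mirroring them on another platform.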

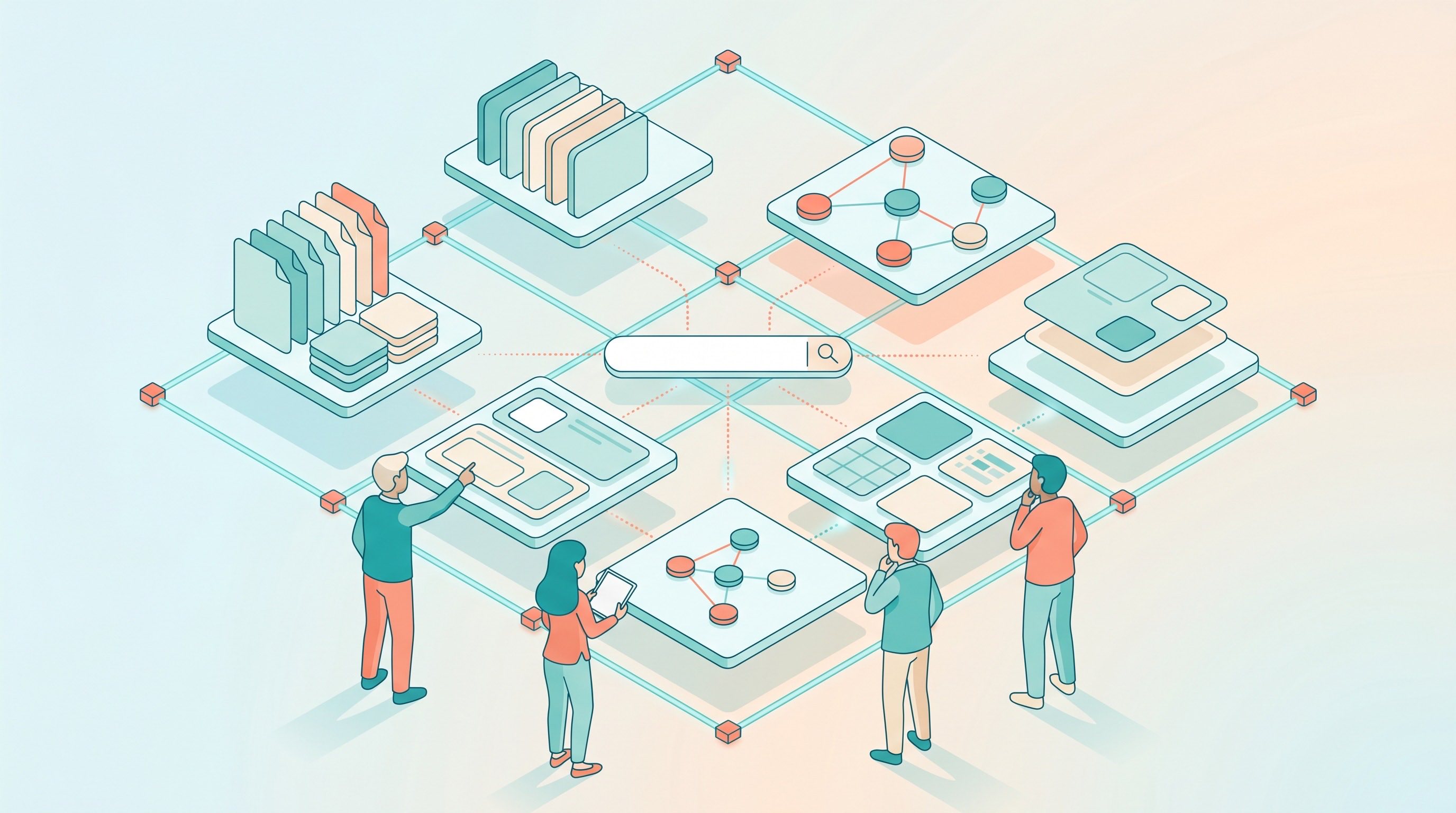

3. Wukong vs OpenClaw: Capability Comparison

Enterprises rarely choose a platform on features alone; they care about who controls the stack, where data lives, and how fast teams can experiment. Wukong’s strength lies in its native DingTalk integration and regulatory alignment in China and the broader APAC market. OpenClaw, by contrast, offers an open toolbox that can run anywhere from private cloud to on-prem nodes, which suits platform teams that need to orchestrate agents across multiple messaging surfaces.

| Capability | Alibaba Wukong + DingTalk | OpenClaw |
|---|---|---|
| Primary strength | Native enterprise automation inside the DingTalk ecosystem | Highly customizable, open framework for multi-channel agents |
| Deployment speed | Fast: pre-built HR/finance templates and drag-and-drop flows | Medium: requires skill installation and custom routing |
| Compliance | China-specific data residency plus enterprise policy kits | Strong for global orgs; flexible hosting (cloud/on-prem) |
| Extensibility | Focused on Alibaba Cloud and DingTalk connectors | Broad plug-in support, multi-surface messaging, cron/heartbeat automations |
| Governance | Centralized DingTalk admin center | Granular but manual (skills, cron jobs, IAM rules) |
| Ideal buyer | DingTalk-centric companies needing turnkey AI | Platform teams prioritizing open tooling and hybrid infrastructure |

In practice, many organizations run a hybrid: Wukong handles DingTalk-native workflows while OpenClaw covers external channels such as email, WhatsApp, or field devices connected through cron-based heartbeats. We have also written guides comparing other tools, like NanoClaw and KiloClaw.

4. Implementation Blueprint for Enterprises

Successful rollouts follow a phased approach that balances experimentation with governance. Start with a two-week assessment sprint to identify the ten workflows that create the most manual work; HR onboarding, procurement approvals, support triage, and finance reconciliations tend to surface first. Map the sensitivity of the data in each workflow to decide whether it stays entirely inside DingTalk or needs OpenClaw’s hybrid routing for external channels.
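The assessment sprint comes down to two steps: rank workflows by manual effort, then decide where each one runs. The sketch below assumes a hypothetical inventory format, made-up channel labels, and a three-level sensitivity scale. The placement rule (send restricted data on external channels to compliance review) is an illustrative policy, not guidance from Alibaba or the OpenClaw project.

```python
from dataclasses import dataclass

# Hypothetical channel labels for surfaces that live inside DingTalk.
DINGTALK_CHANNELS = frozenset({"dingtalk_chat", "dingtalk_docs", "dingtalk_miniapp"})


@dataclass(frozen=True)
class Workflow:
    name: str
    manual_hours_per_month: float
    channels: frozenset[str]
    sensitivity: str  # assumed scale: "public", "internal", "restricted"


def top_workflows(inventory: list[Workflow], n: int = 10) -> list[Workflow]:
    """Rank workflows by manual effort and keep the n heaviest."""
    return sorted(inventory, key=lambda w: w.manual_hours_per_month, reverse=True)[:n]


def placement(w: Workflow) -> str:
    """Illustrative placement rule, not vendor guidance."""
    external = w.channels - DINGTALK_CHANNELS
    if not external:
        return "wukong"  # every touchpoint is inside DingTalk
    if w.sensitivity == "restricted":
        return "compliance_review"  # restricted data would leave DingTalk
    return "openclaw_hybrid"  # external channels routed through OpenClaw


# Illustrative sample inventory; the hour figures are placeholders.
inventory = [
    Workflow("hr_onboarding", 320, frozenset({"dingtalk_chat", "dingtalk_miniapp"}), "restricted"),
    Workflow("support_triage", 410, frozenset({"dingtalk_chat", "email", "whatsapp"}), "internal"),
    Workflow("finance_reconciliation", 280, frozenset({"dingtalk_docs", "email"}), "restricted"),
]

for wf in top_workflows(inventory):
    print(f"{wf.name}: {placement(wf)}")
```

Keeping the rule as a small, explicit function makes it easy for compliance teams to review and change before the pilots start.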

During Weeks 3–6, run a dual pilot. Use the DingTalk AI Agent sandbox to deploy Wukong templates while mirroring the same flows in OpenClaw, using cron jobs and skills to reach parity. Instrument both pilots with shared metrics dashboards that track latency, intervention rate, human override count, and policy violations, so leadership compares like-for-like results rather than anecdotal wins.
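Shared dashboards only compare like-for-like if both pilots log the same fields and compute metrics the same way. The sketch below assumes a hypothetical per-request event record emitted by both pilots; how each platform actually exports telemetry is not covered here, and the definitions of "intervened" and "overridden" are assumptions to adapt to your own taxonomy.

```python
import math
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotEvent:
    platform: str  # e.g. "wukong" or "openclaw"
    latency_ms: float
    intervened: bool  # assumption: a human had to step in before completion
    overridden: bool  # assumption: a human reversed the agent's output
    policy_violation: bool


def p95(values: list[float]) -> float:
    """Nearest-rank 95th percentile of a non-empty list."""
    ordered = sorted(values)
    return ordered[max(0, math.ceil(0.95 * len(ordered)) - 1)]


def summarize(events: list[PilotEvent]) -> dict[str, dict[str, float]]:
    """Compute the same metrics for every platform so pilots compare like-for-like."""
    by_platform: dict[str, list[PilotEvent]] = defaultdict(list)
    for event in events:
        by_platform[event.platform].append(event)

    report: dict[str, dict[str, float]] = {}
    for platform, evs in by_platform.items():
        n = len(evs)
        report[platform] = {
            "requests": n,
            "p95_latency_ms": p95([e.latency_ms for e in evs]),
            "intervention_rate": sum(e.intervened for e in evs) / n,
            "override_count": sum(e.overridden for e in evs),
            "policy_violations": sum(e.policy_violation for e in evs),
        }
    return report
```

Computing every metric through one function, rather than reading each platform's own dashboard, is what keeps the comparison symmetric.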

From Week 4 onward, running in parallel with the pilot, focus on policy and guardrail design. Adopt DingTalk’s guardrail studio for built-in workflows, then mirror those guardrails in OpenClaw using skill-vetter, node-connect, and cron safety routines. From Week 8, establish a Center of Excellence that owns prompt libraries, test harnesses, fallback procedures, and training. This governance function helps keep shadow AI deployments from sprouting across business units.

5. Governance, Compliance, and ROI Metrics

Post-deployment success hinges on relentless measurement. Aim for sub-1.5-second response times in DingTalk chats; OpenClaw automations running through cron or heartbeat loops should stay under two seconds to feel real-time. Keep hallucination rates below three percent for HR and finance workflows by running weekly red-team prompts and recording remediation steps. Adoption is the simplest north star: if 70 percent of targeted workflows are automated within 90 days, the rollout has earned user trust.

Financial metrics matter too. Compare baseline manual handling costs (hours per request, FTE load) with the combined licensing, compute, and operations cost of Wukong plus any OpenClaw nodes. Most 5,000-seat organizations see payback within six to nine months once high-volume tasks migrate.
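The payback comparison can be written as simple arithmetic. This sketch assumes a one-time implementation cost and steady monthly figures once high-volume tasks have migrated; all input values are illustrative placeholders, not benchmarks, and real models usually add a ramp-up period.

```python
import math


def monthly_manual_cost(requests_per_month: float, hours_per_request: float, hourly_cost: float) -> float:
    """Baseline cost of handling requests by hand (hours per request x loaded hourly cost)."""
    return requests_per_month * hours_per_request * hourly_cost


def payback_months(
    upfront_cost: float,
    manual_cost_before: float,
    manual_cost_after: float,
    platform_cost_per_month: float,
) -> float:
    """Months until cumulative net savings cover the one-time cost (simple payback)."""
    net_monthly_savings = manual_cost_before - manual_cost_after - platform_cost_per_month
    if net_monthly_savings <= 0:
        return math.inf  # the platform never pays for itself at these rates
    return upfront_cost / net_monthly_savings


# Placeholder inputs in arbitrary currency units; replace with your own baseline.
before = monthly_manual_cost(requests_per_month=20_000, hours_per_request=0.25, hourly_cost=40)
# Assumed: 6,000 requests per month still need manual handling after automation.
after = monthly_manual_cost(requests_per_month=6_000, hours_per_request=0.25, hourly_cost=40)
platform = 50_000  # assumed licensing + compute + operations per month (Wukong plus any OpenClaw nodes)
print(payback_months(540_000, before, after, platform))  # 6.0
```

With these placeholder inputs, net monthly savings are 200,000 − 60,000 − 50,000 = 90,000, so a 540,000 upfront cost pays back in 6 months.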

- Latency ceiling: <1.5 s in DingTalk chat and <2 s for OpenClaw cron/heartbeat automations keep experiences smooth.

- Hallucination rate: <3% via weekly adversarial tests and per-flow guardrails.

- Adoption KPI: ≥70% of targeted workflows automated after 90 days signals that the rollout has earned user trust.

- ROI model: Manual cost vs. platform + ops spend, typically reaching payback in 6–9 months for 5k-seat orgs.
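The thresholds above can be encoded as an automated check that runs against each reporting snapshot. This is a minimal sketch: the snapshot fields are hypothetical, and it assumes latency is reported as one representative figure (for example a p95) per platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KpiSnapshot:
    dingtalk_latency_s: float  # assumed: one representative figure, e.g. p95
    openclaw_latency_s: float
    hallucination_rate: float  # share of red-team prompts answered with fabrications
    workflows_targeted: int
    workflows_automated: int
    days_since_launch: int


def kpi_failures(s: KpiSnapshot) -> list[str]:
    """List every threshold from the KPI list that the snapshot misses."""
    failures = []
    if s.dingtalk_latency_s >= 1.5:
        failures.append("DingTalk chat latency is not under 1.5 s")
    if s.openclaw_latency_s >= 2.0:
        failures.append("OpenClaw automation latency is not under 2 s")
    if s.hallucination_rate >= 0.03:
        failures.append("hallucination rate is not under 3%")
    adoption = s.workflows_automated / s.workflows_targeted if s.workflows_targeted else 0.0
    # The adoption target applies only once the 90-day window has elapsed.
    if s.days_since_launch >= 90 and adoption < 0.70:
        failures.append(f"adoption at {adoption:.0%} after 90 days (target: 70%)")
    return failures
```

Returning a list of failures rather than a single pass/fail flag lets a weekly report show exactly which target slipped.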

6. Outlook: Where Wukong Heads Next

Alibaba has signaled that Wukong will push deeper into verticalized copilots fueled by domain data. Manufacturing and logistics packs already stitch IoT readings with procurement triggers, and financial services templates are in limited preview. Expect the platform to expose more REST hooks so Wukong can call third-party platforms such as ServiceNow or Workday without forcing data to leave regulated zones.

- Industry-specific copilots: Manufacturing, logistics, and retail packs align prompts with real sensor data.

- Cross-platform compatibility: Upcoming REST hooks let Wukong orchestrate third-party systems while preserving DingTalk governance.

- AI safety: Federated fine-tuning keeps sensitive examples local, reducing regulatory exposure and building trust with compliance teams.

FAQ

1. What makes Alibaba’s Wukong agent different from generic LLM copilots?

Wukong pairs DingTalk intent streams with Alibaba Cloud governance, so workflows, guardrails, and telemetry live in one admin console instead of scattered point tools. That unified control plane is why regulated enterprises can sign off on deployments faster than they can with piecemeal bots.

2. Can I run Wukong and OpenClaw together?

Yes. Many enterprises deploy Wukong for DingTalk-native work while using OpenClaw for external channels (email, WhatsApp) or on-prem tasks that require custom cron jobs. Keeping both in play lets you use Wukong’s compliance presets without giving up OpenClaw’s extensibility.

3. How does DingTalk AI Agent 2026 handle compliance reviews?

It exports immutable audit logs, ties guardrail policies to user/device trust scores, and supports per-department approval matrices so legal teams can attest to controls quickly. Auditors can replay every agent action, which can substantially shorten quarterly review cycles.

4. What KPIs prove the rollout works?

Track time-to-resolution, agent escalation rate, average response latency, and policy violation alerts. Layer in qualitative feedback from pilot teams, and you will spot when adoption stalls long before executive dashboards turn red.